Today we are going to look at a great AMD EPYC server. The Supermicro AS-2015CS-TNR is a single-socket AMD EPYC 9004 “Genoa” server, and this was the first time we had tested this particular configuration: 84 cores with 384GB of memory. While we are testing with the 84-core AMD EPYC “Genoa” part, we will note that this server also works with the AMD EPYC “Bergamo” line with up to 128 cores, but the review embargo for those chips has not lifted. Still, 84 cores and 384GB was a fun configuration to test. Let us take a look at the server.

Supermicro AS-2015CS-TNR External Hardware Overview

The server itself is a 2U chassis from the company’s CloudDC line. CloudDC is Supermicro’s scale-out line that adopts a lower-cost approach than some of its higher-end servers. This is a segment where companies like Dell, HPE, and Lenovo struggle, since they tend to compete with higher-priced, more proprietary offerings, and CloudDC is designed to undercut those.

At 648mm or 25.5″ deep, this is designed to fit in shorter-depth low-cost hosting racks.

The 2U form factor has 12x 3.5″ bays. These are capable of NVMe, SATA, and SAS (with a controller). While some may prefer dense 2.5″ configurations, we are moving into the era of 30TB and 60TB NVMe SSDs, so having a smaller number of NVMe drives is going to become more commonplace in most servers. Disk will still be less expensive.
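To put those drive sizes in context, here is a quick back-of-the-envelope sketch of raw capacity if all 12 bays were populated with the 30TB or 60TB class SSDs mentioned above. It uses the nominal sizes from the text and assumes every bay holds the same drive. The function name is ours for illustration, and the figures ignore RAID or erasure coding and filesystem overhead.

```python
# Rough raw-capacity arithmetic for a fully populated front panel.
# Assumption: all bays hold identical drives at their nominal capacity.
BAYS = 12  # 12x 3.5" bays, per the review


def raw_capacity_tb(drive_tb: float, bays: int = BAYS) -> float:
    """Return raw capacity in TB, before any redundancy or filesystem overhead."""
    return drive_tb * bays


if __name__ == "__main__":
    for drive_tb in (30, 60):
        total = raw_capacity_tb(drive_tb)
        print(f"{BAYS} x {drive_tb}TB = {total:.0f}TB raw")
```

Even with only 12 bays, that works out to hundreds of terabytes of raw flash in a single 2U chassis.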

On the left side of the chassis, we can see the rack ear with the power button and status LEDs. We can also see disk 0 with an orange tab on the carrier to denote NVMe.

Here is a Kioxia SSD in one of the 3.5″ trays. One downside of using a 2.5″ drive in a 3.5″ bay is that it requires screws, so one cannot have a tool-less installation as with 3.5″ drives. That is the price of flexibility.

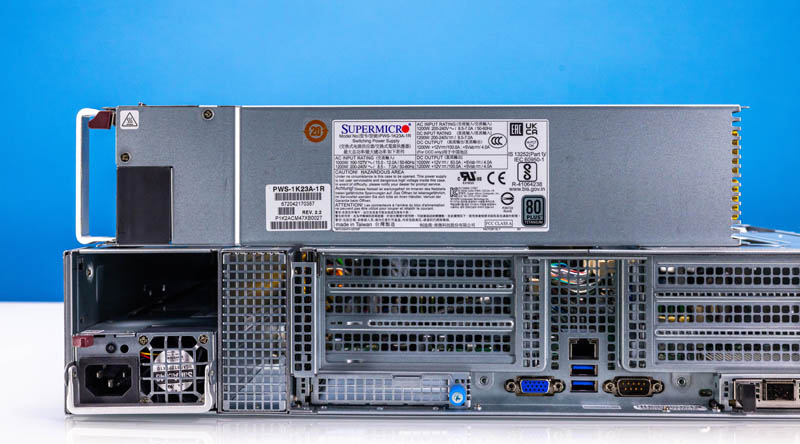

The rear of the server is quite different from many Supermicro designs in the past. It is laid out almost symmetrically, except for the power supplies.

As we would expect from this class of server, we have two redundant power supplies.

Each power supply is a 1.2kW 80Plus Titanium unit for high efficiency. As we will see in our power consumption section, these PSUs are plenty for the server, even with expansion cards added.

In the center of the chassis, we have the rear I/O with a management port, two USB ports, a VGA port, and a serial port.

On either side of that, we have a stack of PCIe Gen5 slots via risers and an OCP NIC 3.0 slot.

Supermicro calls its OCP NIC 3.0 cards AIOM modules, and there are two slots. The 25GbE AOC-A25G-i2SM NIC installed in one of these slots is an SFF with Pull Tab design. You can see our “OCP NIC 3.0 Form Factors: The Quick Guide” to learn more about the different types. This is the type that server vendors focused on low-cost, easy maintenance have adopted, so these cards are common in cloud data centers. In the industry, you will see companies focused on extracting large service revenues, such as Dell and HPE, adopt the internal lock OCP NIC 3.0 form factor. The internal lock requires a chassis to be pulled from a rack, opened up, and often risers removed to be able to service the NIC. Supermicro’s design, in contrast, can be serviced from the hot aisle in the data center without moving the server. That is why Supermicro and cloud providers prefer this mechanism.

We are going to discuss the riser setup as part of our internal overview. Next, let us take a look inside the system.
